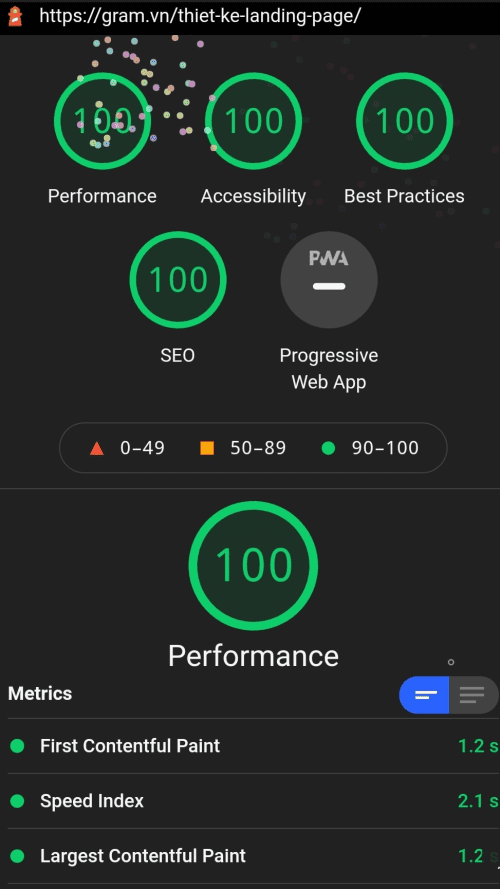

Discussion 2: Core Web Vitals (CWV): Performance: 98 | Accessibility: 100 | Best Practices: 100 | SEO: 100 | Try This Strategy!

Andrei

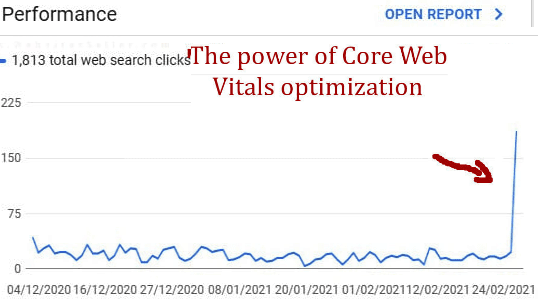

Preparing for Google's Core Web Vitals Update (I've already seen some positive results):

Core Web Vitals report: Performance: 98 | Accessibility: 100 | Best Practices: 100 | SEO: 100

Here is what I did:

I. Bad links cleanup (disavow tool)

II. SEO:

1. Has a `<meta name="viewport">` tag with `width` or `initial-scale`;

2. Document has a `<title>` element;

3. Document has a meta description;

4. Page has successful HTTP status code;

5. Links have descriptive text;

6. Page isn't blocked from indexing;

7. robots.txt is valid;

8. Document has a valid hreflang;

9. Document has a valid rel=canonical;

10. Document uses legible font sizes – 100% legible text;

11. Document avoids plugins;
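Several of the SEO audits above (viewport, title, meta description, canonical, not blocked from indexing) can be spot-checked locally before running a full report. The sketch below uses only Python's standard-library `html.parser`; the names `HeadAuditParser` and `audit_head` and the pass/fail rules are hypothetical simplifications, not Lighthouse's actual audit logic (for example, it ignores the `X-Robots-Tag` header and robots.txt).

```python
from html.parser import HTMLParser


class HeadAuditParser(HTMLParser):
    """Collects a few <head> signals checked by the SEO audits listed above."""

    def __init__(self) -> None:
        super().__init__()
        self.has_title = False
        self.viewport = None
        self.description = None
        self.canonical = None
        self.robots = None

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag/attribute names; valueless attributes arrive as None.
        a = {name: (value or "") for name, value in attrs}
        if tag == "title":
            self.has_title = True
        elif tag == "meta":
            name = a.get("name", "").lower()
            if name == "viewport":
                self.viewport = a.get("content", "")
            elif name == "description":
                self.description = a.get("content", "")
            elif name == "robots":
                self.robots = a.get("content", "").lower()
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonical = a.get("href", "")


def audit_head(html: str) -> dict:
    """Return a simplified pass/fail map; a rough local check, not Lighthouse itself."""
    parser = HeadAuditParser()
    parser.feed(html)
    viewport_keys = set()
    if parser.viewport is not None:
        # e.g. "width=device-width, initial-scale=1" -> {"width", "initial-scale"}
        viewport_keys = {part.split("=")[0].strip().lower() for part in parser.viewport.split(",")}
    return {
        "viewport": bool(viewport_keys & {"width", "initial-scale"}),
        "title": parser.has_title,
        "meta_description": bool(parser.description and parser.description.strip()),
        "canonical": bool(parser.canonical),
        "indexable": not (parser.robots and "noindex" in parser.robots),
    }
```

Feed it the HTML of a page (for example, saved from "View source") and any `False` value points at the corresponding audit to look at first.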

III. Accessibility

1. [aria-*] attributes match their roles;

2. [aria-*] attributes have valid values;

3. [aria-*] attributes are valid and not misspelled;

4. Buttons have an accessible name;

5. The page contains a heading, skip link, or landmark region;

6. Background and foreground colors have a sufficient contrast ratio;

7. Document has a `<title>` element;

8. [id] attributes on the page are unique;

9. `<html>` element has a `[lang]` attribute;

10. `<html>` element has a valid value for its `[lang]` attribute;

11. Form elements have associated labels;

12. Links have a discernible name;

13. Lists contain only `<li>` elements and script-supporting elements (`<script>` and `<template>`);

14. List items (`<li>`) are contained within `<ul>` or `<ol>` parent elements;

15. `[user-scalable="no"]` is not used in the `<meta name="viewport">` element and the `[maximum-scale]` attribute is not less than 5;
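Item 6 (contrast) is usually the accessibility audit that needs a design decision rather than a code fix. The WCAG 2.x contrast ratio is computed from the relative luminance of the two colors; the sketch below implements that published formula, with the AA thresholds of 4.5:1 for normal text and 3:1 for large text. The function names are illustrative only.

```python
def _linearize(channel: int) -> float:
    """sRGB channel (0-255) to linear light, per the WCAG 2.x relative-luminance definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg, bg) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1 to 21."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg, bg, large_text: bool = False) -> bool:
    """WCAG AA: at least 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)
```

For example, `#767676` text on white comes out at about 4.54:1 and passes AA, while `#777777` is about 4.48:1 and falls just short.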

IV. Best Practices:

1. Avoids Application Cache;

2. Uses HTTPS;

3. Uses HTTP/2 for its own resources;

4. Uses passive listeners to improve scrolling performance;

5. Avoids `document.write()`;

6. Links to cross-origin destinations are safe;

7. Avoids requesting the geolocation permission on page load;

8. Page has the HTML doctype;

9. Avoids front-end JavaScript libraries with known security vulnerabilities;

10. Detected JavaScript libraries;

11. Avoids requesting the notification permission on page load;

12. Avoids deprecated APIs;

13. Allows users to paste into password fields;

14. No browser errors logged to the console;

15. Displays images with correct aspect ratio;
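Item 6 ("Links to cross-origin destinations are safe") refers to links opened with `target="_blank"` that lack `rel="noopener"` or `rel="noreferrer"`. A minimal standard-library sketch for finding them in a page's HTML follows; it compares the URL's netloc (host and optional port) rather than the full origin, and `find_unsafe_blank_links` is a hypothetical helper name.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class BlankTargetLinkParser(HTMLParser):
    """Records cross-origin <a target="_blank"> links missing noopener/noreferrer."""

    def __init__(self, page_url: str) -> None:
        super().__init__()
        self.page_host = urlparse(page_url).netloc.lower()
        self.unsafe = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        a = {name: (value or "") for name, value in attrs}
        href = a.get("href", "")
        host = urlparse(href).netloc.lower()
        # Relative links have an empty netloc, so they are treated as same-origin.
        cross_origin = bool(host) and host != self.page_host
        rel_tokens = set(a.get("rel", "").lower().split())
        opens_new_tab = a.get("target", "").lower() == "_blank"
        if opens_new_tab and cross_origin and not (rel_tokens & {"noopener", "noreferrer"}):
            self.unsafe.append(href)


def find_unsafe_blank_links(html: str, page_url: str) -> list:
    parser = BlankTargetLinkParser(page_url)
    parser.feed(html)
    return parser.unsafe
```

Each URL it returns is a link that should get `rel="noopener"` (or `noreferrer`) added in the theme or content.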

V. Performance:

1. Eliminate render-blocking resources;

2. Properly size images;

3. Defer offscreen images;

4. Minify CSS;

5. Minify JavaScript;

6. Efficiently encode images;

7. Serve images in next-gen formats;

8. Enable text compression;

9. Preconnect to required origins;

10. Server response times are low (TTFB): Root document took 30 ms;

11. Avoid multiple page redirects;

12. Preload key requests;

13. Use video formats for animated content;

14. Avoids enormous network payloads: Total size was 273 KB;

15. Uses efficient cache policy on static assets: 0 resources found;

16. Avoids an excessive DOM size: 562 nodes;

17. User Timing marks and measures;

18. JavaScript execution time: 0.7 s;

19. All text remains visible during webfont loads;
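Item 8 ("Enable text compression") is normally a server setting (gzip or Brotli for HTML, CSS and JavaScript). To estimate what it would save on a given response, the sketch below uses Python's built-in `gzip` module. The `needs_compression` helper and its cut-offs (at least 10% and 1,400 bytes saved) are assumptions chosen for illustration, not the exact thresholds of any particular tool.

```python
import gzip


def compression_savings(body: bytes, level: int = 6) -> tuple:
    """Return (original_bytes, gzipped_bytes, fraction_saved) for a response body."""
    compressed = gzip.compress(body, compresslevel=level)
    saved = 1 - len(compressed) / len(body) if body else 0.0
    return len(body), len(compressed), saved


def needs_compression(headers: dict, body: bytes,
                      min_fraction: float = 0.10, min_bytes: int = 1400) -> bool:
    """Flag an uncompressed text response whose gzip savings clear both (assumed) cut-offs."""
    encoding = {k.lower(): v for k, v in headers.items()}.get("content-encoding", "")
    if encoding.lower() in ("gzip", "br", "deflate"):
        return False  # already compressed on the wire
    original, compressed, saved = compression_savings(body)
    return saved >= min_fraction and (original - compressed) >= min_bytes
```

Markup, CSS and JavaScript are repetitive text, which is why they typically shrink a lot; images such as JPEG or WebP are already compressed and gain little.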


Thanks. This is exactly what I was looking for. But I have one question. Do you have ads on that page?

No ads

Silva

That was my guess. Can you share your previous score? I guess I'm going to try that with ads.

Andrei ✍️

Unfortunately, I didn't save the previous score, but it was much lower than the current one. Basically, if you follow the Roman numerals (starting with the disavow, if necessary, and so on), you will get positive results.

Silva

Thanks! Who wouldn't love a 4x increase in revenue? But this does look like it'll give me a LOT of work.

Andrei ✍️

Idk if it helps, but take a look at this Reddit thread, "I'm a developer studying Core Web Vitals. How much do you think vitals will change SEO and performance monitoring?" (https://www.reddit.com/r/bigseo/comments/idjvvx/im_a_developer_studying_core_web_vitals_how_much/g2b4wzx/?utm_source=reddit&utm_medium=web2x&context=3). I'm on mobile, so idk if the link works for you. It's about site speed, Google's measurements and Google's services.

Silva

Thanks. It does help. Unfortunately, the thread is archived.

Mike

That's a Lighthouse report, not a Core Web Vitals report. CWV are a small part of what Lighthouse reports on. 95% of what you did has nothing to do with Core Web Vitals.

Just wanted to point that out for people learning about CWV.


I was gonna say, it can often spike if you've recently made changes to the site. You have to track it over a longer period to see any real result.

Patricio

I was just gonna point that out! Thanks, Mike. 😀 I am wondering, is PageSpeed Insights powered by Lighthouse? Because I don't think they give the same results. Or is PageSpeed Insights Lighthouse + something else? I feel like people know this already, but I may have missed the memo 😂🤦🏻♀️

Andrei ✍️ » Mike

Yes and no.

Yes, it's a Lighthouse report (this is the link, if anybody else is curious: https://lighthouse-ci.appspot.com/try). I also tested with web.dev, the Chrome User Experience Report (CrUX) and PageSpeed Insights and, of course, watched the Core Web Vitals (CWV) report in Google Search Console (GSC), and everything looks very good.

No, regarding this: "95% of what you did has nothing to do with Core Web Vitals." Site speed is one of the most important factors when we talk about Core Web Vitals. Quoting JohnMu (see the Reddit link in one of my replies): "Ultimately, users don't care whose components you use on your site — **slow is slow** (…)".

Of course, as I mentioned in the title, I am just preparing for the May update. Only after the May update will I see what works and what doesn't, where and what to improve, etc. But, like anyone else, I am still learning a lot and want the best for my sites.

Mike » Andrei

Core Web Vitals is not page speed. Those are two different things you are conflating. Core Web Vitals is LCP, FID, and CLS. Those 3 metrics. That's it.
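To make that distinction concrete: each of the three metrics has a published "good" and "poor" boundary (LCP 2.5 s / 4 s, FID 100 ms / 300 ms, CLS 0.1 / 0.25), and Google assesses them on field data at the 75th percentile of page loads. The sketch below classifies lists of field samples that way; the nearest-rank percentile and the function names are simplifying assumptions, not how CrUX actually aggregates its data.

```python
import math

# (good upper bound, needs-improvement upper bound); anything above the second is "poor".
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "FID": (100, 300),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def p75(samples):
    """75th percentile using the nearest-rank method."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric, samples):
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return value, "good"
    if value <= poor:
        return value, "needs improvement"
    return value, "poor"


def passes_cwv(field_data):
    """True only if LCP, FID and CLS are all rated "good" at the 75th percentile."""
    return all(rate(metric, field_data[metric])[1] == "good" for metric in THRESHOLDS)
```

Note what is absent: none of the Lighthouse audits listed in the original post appear here, although several of them (render-blocking resources, image sizing, JavaScript execution time) can move these three numbers.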

Andrei ✍️ » Mike

That's what I said, too. Site speed is an important factor in the May update. CWV (LCP, FID and CLS) are the newest metrics.

https://twitter.com/googlesearchc/status/1326192937164705797 (Google Search Central on Twitter)

Tagez » Mike

I've read all the comments and now I'm confused. To me, CWV are indeed metrics. Lighthouse and PageSpeed are tools that measure things, including CWV, and give some recommendations. I also thought that PageSpeed is a short extract from Lighthouse (the performance part). No?

Andrei ✍️ » Tagez

Here is the best explanation regarding CWV (directly from JohnMu):

https://youtu.be/ggpZA5U2rZk?t=135 (Core Web Vitals and SEO)

Casey

I had a bunch of our agency's websites see a huge spike in organic traffic at the end of last month from traffic bots. Our data showed the same spikes on four different accounts around the same time as your spike. Did your traffic stay that high in the week since this data was collected? Huge spikes like this are usually artificial. If your traffic has dropped since, it was likely a bot. In Google Analytics (GA), check your source/medium and keywords for trafficbot(dot)live, bot-traffic(dot)xyz and free-traffic(dot)xyz. (Note: these are all URLs, but community standards don't allow me to post the URLs directly, and for good reason.)
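If you export source/medium rows from Google Analytics (for example as a CSV), a quick way to see how much of a spike comes from the referrer-spam domains listed above is to total their sessions separately. The row format `(source_medium, sessions)` and the helper name `split_bot_sessions` are hypothetical; adapt them to whatever your export actually contains.

```python
# Referrer-spam domains mentioned in the comment above.
SPAM_DOMAINS = ("trafficbot.live", "bot-traffic.xyz", "free-traffic.xyz")


def split_bot_sessions(rows):
    """Split (source_medium, sessions) rows into (bot_sessions, other_sessions)."""
    bot, other = 0, 0
    for source_medium, sessions in rows:
        # GA shows e.g. "trafficbot.live / referral"; keep only the source part.
        source = source_medium.split("/")[0].strip().lower()
        # Match the bare domain or any subdomain of it (e.g. "www.").
        if any(source == d or source.endswith("." + d) for d in SPAM_DOMAINS):
            bot += sessions
        else:
            other += sessions
    return bot, other
```

If the bot total accounts for most of the spike, the "growth" is artificial; if it is near zero, as Andrei reports below, the spike has some other cause.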

Thank you, Casey! It has decreased since the last screenshot, but it's still above average. Those are not bots. Basically, most of the boost came from a few old articles (over 60% on one of them). Besides the optimizations I made, I suspect the bad-link cleanup had a big impact; that's why I put it at the top of my list. Isuru posted a comment above with the term "Spike of hope", a term I've known for a few years, and (because the boost wasn't steady) he's not far from the truth. But at least I've solved this little piece of the puzzle.

Huấn

Mine 😊

That's impressive! Congratulations, and keep up the good work!

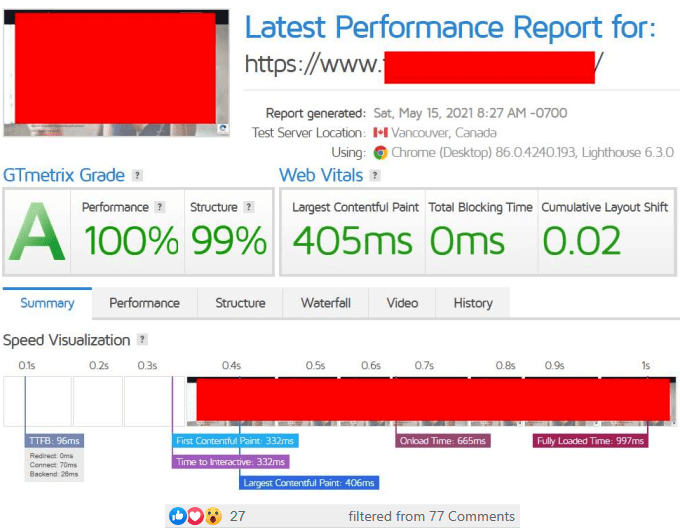

Discussion 1: Some Nuggets for Achieving an All-Green Latest Performance Report on GTmetrix: Grade A, Performance 100%, Structure 99%, Total Blocking Time (TBT) 0 ms

Michael Pedrotti 🎓

Some speed optimization on one of my sites. Managed to get it fully loaded in under 1 second. It's a super image-heavy site with a big hero image, plus 6 full blog images loaded via a query. No NitroPack or image Content Delivery Network (CDN) here either!


Michael Pedrotti, 99 for both mobile and desktop on Google PageSpeed Insights? That's impossible with genuine optimization methods on a WordPress website. Delete everything, leave just Google reCAPTCHA on the page, and your score will drop below 99 in the PageSpeed Insights tool. You have no control over external scripts.

Spoiler Alert:

There are methods to trick page speed tools into showing higher scores. One of them is to use the browser's user agent to serve a screenshot to the page speed tools. Others include loading scripts only when the user scrolls the page or after a fixed amount of time (NitroPack does this), etc.

Spoiler Alert 2:

Check out the so-called speed optimization gurus on Fiverr, Upwork and Facebook: 90% of them hide the website name when they share their work. Why? Because the websites either show good scores only for a few days, or the website link would reveal the bad methods they used to deceive their clients.

CONCLUSION:

Don't chase 100/100 scores on Google PageSpeed Insights, GTmetrix or other tools. Use these tools to find the areas you can improve: optimizing images, scaling images, fixing Cumulative Layout Shift (CLS), enabling caching, minifying scripts and Cascading Style Sheets (CSS), and removing any unused scripts and CSS. That's all on the technical side. Now focus on user experience; user experience is the main thing you should focus on. Again, don't chase 100/100 on speed tools: people are looting you and destroying your website's Search Engine Optimization (SEO) with so-called speed optimizations. Check YouTube.com in PageSpeed Insights: you'll never get a 90+ score for Google's own video platform, yet it's still the most popular video platform in the world.

It's most definitely possible, and with good user experience (UX). And like I said in my opening post, I'm not using NitroPack. You can search this group for my thoughts on using NitroPack. If you can't get those scores, then you don't know what you are doing.

Hiding the URL is for protection. You have obviously not been in the business long enough; otherwise you would know how bad negative SEO is.

If you look back in this group you will see I have been doing lots of speed related stuff for a while now.

Slim

No image CDN either? Damn, that's good. What did you do, edit the images one by one? Good job, btw. Bragging rights 😉

No image CDN. It's part of our process to run all images through TinyPNG before they are uploaded. It's good practice. From there, we use their plugin to optimize all the different image sizes. After testing everything, we have not found anything that retains quality and compresses images as well as TinyPNG. We could get extra gains by switching to WebP, but we prefer compatibility right now.
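TinyPNG's compression itself isn't reproduced here, but the resizing half of "optimize all the different image sizes" is simple arithmetic: scale each image to fit a target box while keeping its aspect ratio and never upscaling. The target widths in this sketch are hypothetical; WordPress and similar platforms let each site configure its own sizes.

```python
def fit_within(width, height, max_width, max_height):
    """Scale (width, height) to fit inside the box, preserving aspect ratio, never upscaling."""
    scale = min(max_width / width, max_height / height, 1.0)
    return max(1, round(width * scale)), max(1, round(height * scale))


def size_variants(width, height, target_widths=(300, 768, 1024)):
    """Width-constrained variants smaller than the original (target widths are hypothetical)."""
    return [fit_within(width, height, w, height) for w in target_widths if w < width]
```

For a 4000×3000 upload this yields 300×225, 768×576 and 1024×768 variants, each of which would then go through the compressor.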

Slim

Does WebP fall short somewhere? I've never heard of any incompatibility with WebP.

Michael Pedrotti ✍️🎓 » Slim

With Safari, yes, it doesn't work, so you need a fallback.
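One common way to provide that fallback on the server is content negotiation: serve the WebP file only when the browser's `Accept` header lists `image/webp`, and send `Vary: Accept` so caches keep the variants apart. (The client-side alternative is a `<picture>` element with a WebP `<source>` and a JPEG or PNG `<img>`.) Safari added WebP support in version 14, so the fallback mainly matters for older Safari versions. The sketch assumes every image exists as both `.webp` and `.jpg` side by side; that file layout and the function name are hypothetical.

```python
def pick_image_variant(accept_header: str, base_path: str):
    """Return (path, extra_headers): WebP only if the client explicitly advertises it."""
    media_types = {part.split(";")[0].strip().lower() for part in accept_header.split(",")}
    # A bare "image/*" or "*/*" is deliberately not treated as WebP support (conservative).
    path = f"{base_path}.webp" if "image/webp" in media_types else f"{base_path}.jpg"
    return path, {"Vary": "Accept"}
```

The explicit-only check errs on the side of compatibility, which matches the preference stated above: a browser that never asks for WebP always gets the JPEG.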

Socaş

Bro… I ain't no hater… Big congratulations! But if it's all down to the server back end, could you share a link so we can get some deeper thoughts/insights? 🧐 🤷

There is no real guide to setting up a server. This is just something I have been doing for a long time. But I'll do my best to break it down.

1. First, make sure you have a server on a decent network. Skimping on hosting and going with the cheapest option is literally the worst thing you can do. At the very least, the host should have Memcached and Redis already installed on the server. Most real hosts have this. If you're setting up your own server, make sure it's dedicated.

2. Use an edge network: a real edge network, not just free Cloudflare, and not just for images. Spend some money here. This will also make sure your site isn't taken down by some kid using a free booter.

3. Start with a default WordPress install plus whatever you need to connect to the Memcached/Redis servers correctly.

4. Use loader.io to test whether everything is set up correctly. A rule of thumb: 1,000 simultaneous users should add only 200 ms to your page load times. If you're not getting these numbers, go back to the drawing board before you proceed.

^^^ THIS IS THE MOST CRITICAL STEP! ANYONE CAN GET GOOD SCORES WHEN NO ONE IS ON THE WEBSITE! MAKE SURE IT'S LOAD TESTED TOO!

5. Keep your plugins lean and mean, and NO ThemeForest themes. Audit how heavy the code is, and swap things out accordingly. For example, use Oxygen Builder instead of Elementor, and Rank Math instead of Yoast, etc. Just make sure you REALLY need that plugin.

6. Have systems in place for images, such as resizing and optimizing them before you upload them to the server. For this, TinyPNG and its plugin are the best out there if you want to stay with PNG. The easy way out is WebP, but it's not compatible with Safari on Apple devices yet. Just be aware of this. Still, best practice is to have every image correctly sized for where it's placed anyway.
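The load-testing rule of thumb from step 4 can be checked mechanically once you have response times from a quiet baseline run and from the 1,000-user run (loader.io or any other load-testing tool can produce these). The sketch compares medians; choosing the median rather than, say, the 95th percentile is an assumption, since the rule as stated doesn't say which statistic to use. `passes_load_rule` is a hypothetical name.

```python
from statistics import median


def passes_load_rule(baseline_ms, loaded_ms, max_added_ms=200):
    """Return (ok, added_ms): ok if the loaded median is at most max_added_ms slower."""
    added = median(loaded_ms) - median(baseline_ms)
    return added <= max_added_ms, added
```

A stricter variant would compare a high percentile instead, since the slowest responses under load are the ones real visitors notice.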

There are plugins out there like NitroPack, but I no longer recommend or use them myself. They have issues beyond just tricking page speed.

I hope this points you in the right direction.


Socaş

Thanks, bro! I've been studying server setup a lot lately… I know that's where the magic door is 🙂 I really appreciate you sharing the info… I will try the setup… I'm also going to start using SlickStack as a server setup for WordPress… Have you gotten into it?

Michael Pedrotti ✍️🎓 » Socaş

Nope. I either do it manually or use Virtualmin. Virtualmin is very dated, though.

Socaş

I've read that LEMP stack servers are the Holy Grail 🙂 It's in beta, indeed… https://slickstack.io/ (open source) 🙂. That's cool, we can compare 🙂 You go your way, and I look forward to setting up SlickStack on some cloud…


Gie

Nice one, Michael Pedrotti! Do you run ads on your site?

Is this setup attainable on a site using Ezoic?

I use Oxygen Builder on WPX hosting and got 90+ on both mobile and desktop. But as soon as I added Ezoic, it went down 🙁

I have no ads on my sites. They are usually 100% affiliate.