Dal

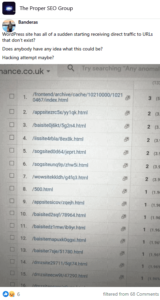

What are people using to block bots these days? E.g. Ahrefs, Majestic, SEMrush, etc.

There are some scripts and some stuff in robots.txt.

Nihil » Dal

https://www.semrush.com/bot/?sscid=71k4_5kdyn&utm_source=berush&utm_medium=sas&utm_campaign=314743&utm_term=1537039

SEMrush Bot | SEMrush

Sean » Kevin

and his team released link silencer.

Have a gander.

https://linksilencer.com – completely free of charge. Enjoy! 🙂

Anthony

User-agent: AhrefsBot

User-agent: AhrefsSiteAudit

User-agent: adbeat_bot

User-agent: Alexibot

User-agent: AppEngine

User-agent: Aqua_Products

User-agent: archive.org_bot

User-agent: archive

User-agent: asterias

User-agent: b2w/0.1

User-agent: BackDoorBot/1.0

User-agent: BecomeBot

User-agent: BlekkoBot

User-agent: Blexbot

User-agent: BlowFish/1.0

User-agent: Bookmark search tool

User-agent: BotALot

User-agent: BuiltBotTough

User-agent: Bullseye/1.0

User-agent: BunnySlippers

User-agent: CCBot

User-agent: CheeseBot

User-agent: CherryPicker

User-agent: CherryPickerElite/1.0

User-agent: CherryPickerSE/1.0

User-agent: chroot

User-agent: Copernic

User-agent: CopyRightCheck

User-agent: cosmos

User-agent: Crescent

User-agent: Crescent Internet ToolPak HTTP OLE Control v.1.0

User-agent: DittoSpyder

User-agent: dotbot

User-agent: dumbot

User-agent: EmailCollector

User-agent: EmailSiphon

User-agent: EmailWolf

User-agent: Enterprise_Search

User-agent: Enterprise_Search/1.0

User-agent: EroCrawler

User-agent: es

User-agent: exabot

User-agent: ExtractorPro

User-agent: FairAd Client

User-agent: Flaming AttackBot

User-agent: Foobot

User-agent: Gaisbot

User-agent: GetRight/4.2

User-agent: gigabot

User-agent: grub

User-agent: grub-client

User-agent: Go-http-client

User-agent: Harvest/1.5

User-agent: Hatena Antenna

User-agent: hloader

User-agent: http://www.SearchEngineWorld.com bot

User-agent: http://www.WebmasterWorld.com bot

User-agent: httplib

User-agent: humanlinks

User-agent: ia_archiver

User-agent: ia_archiver/1.6

User-agent: InfoNaviRobot

User-agent: Iron33/1.0.2

User-agent: JamesBOT

User-agent: JennyBot

User-agent: Jetbot

User-agent: Jetbot/1.0

User-agent: Jorgee

User-agent: Kenjin Spider

User-agent: Keyword Density/0.9

User-agent: larbin

User-agent: LexiBot

User-agent: libWeb/clsHTTP

User-agent: LinkextractorPro

User-agent: LinkpadBot

User-agent: LinkScan/8.1a Unix

User-agent: LinkWalker

User-agent: LNSpiderguy

User-agent: looksmart

User-agent: lwp-trivial

User-agent: lwp-trivial/1.34

User-agent: Mata Hari

User-agent: Megalodon

User-agent: Microsoft URL Control

User-agent: Microsoft URL Control – 5.01.4511

User-agent: Microsoft URL Control – 6.00.8169

User-agent: MIIxpc

User-agent: MIIxpc/4.2

User-agent: Mister PiX

User-agent: MJ12bot

User-agent: moget

User-agent: moget/2.1

User-agent: mozilla

User-agent: Mozilla

User-agent: mozilla/3

User-agent: mozilla/4

User-agent: Mozilla/4.0 (compatible; BullsEye; Windows 95)

User-agent: Mozilla/4.0 (compatible; MSIE 4.0; Windows 2000)

User-agent: Mozilla/4.0 (compatible; MSIE 4.0; Windows 95)

User-agent: Mozilla/4.0 (compatible; MSIE 4.0; Windows 98)

User-agent: Mozilla/4.0 (compatible; MSIE 4.0; Windows NT)

User-agent: Mozilla/4.0 (compatible; MSIE 4.0; Windows XP)

User-agent: mozilla/5

User-agent: MSIECrawler

User-agent: naver

User-agent: NerdyBot

User-agent: NetAnts

User-agent: NetMechanic

User-agent: NICErsPRO

User-agent: Nutch

User-agent: Offline Explorer

User-agent: Openbot

User-agent: Openfind

User-agent: Openfind data gathere

User-agent: Oracle Ultra Search

User-agent: PerMan

User-agent: ProPowerBot/2.14

User-agent: ProWebWalker

User-agent: psbot

User-agent: Python-urllib

User-agent: QueryN Metasearch

User-agent: Radiation Retriever 1.1

User-agent: RepoMonkey

User-agent: RepoMonkey Bait & Tackle/v1.01

User-agent: RMA

User-agent: rogerbot

User-agent: scooter

User-agent: Screaming Frog SEO Spider

User-agent: searchpreview

User-agent: SEMrushBot

User-agent: SemrushBot

User-agent: SemrushBot-SA

User-agent: SEOkicks-Robot

User-agent: SiteSnagger

User-agent: sootle

User-agent: SpankBot

User-agent: spanner

User-agent: spbot

User-agent: Stanford

User-agent: Stanford Comp Sci

User-agent: Stanford CompClub

User-agent: Stanford CompSciClub

User-agent: Stanford Spiderboys

User-agent: SurveyBot

User-agent: SurveyBot_IgnoreIP

User-agent: suzuran

User-agent: Szukacz/1.4

User-agent: Teleport

User-agent: TeleportPro

User-agent: Telesoft

User-agent: Teoma

User-agent: The Intraformant

User-agent: TheNomad

User-agent: toCrawl/UrlDispatcher

User-agent: True_Robot

User-agent: True_Robot/1.0

User-agent: turingos

User-agent: Typhoeus

User-agent: URL Control

User-agent: URL_Spider_Pro

User-agent: URLy Warning

User-agent: VCI

User-agent: VCI WebViewer VCI WebViewer Win32

User-agent: Web Image Collector

User-agent: WebAuto

User-agent: WebBandit

User-agent: WebBandit/3.50

User-agent: WebCopier

User-agent: WebEnhancer

User-agent: WebmasterWorld Extractor

User-agent: WebmasterWorldForumBot

User-agent: WebSauger

User-agent: Website Quester

User-agent: Webster Pro

User-agent: WebStripper

User-agent: WebVac

User-agent: WebZip

User-agent: WebZip/4.0

User-agent: Wget

User-agent: Wget/1.5.3

User-agent: Wget/1.6

User-agent: WWW-Collector-E

User-agent: Xenu's

User-agent: Xenu's Link Sleuth 1.1c

User-agent: Zeus

User-agent: Zeus 32297 Webster Pro V2.9 Win32

User-agent: Zeus Link Scout

Disallow: /

robots.txt.
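A quick way to sanity-check a list like this before deploying it is to run it through a robots.txt parser. The sketch below uses Python's standard `urllib.robotparser` on a shortened, hypothetical excerpt of the list; the `is_allowed` helper and the `example.com` URL are illustrative assumptions, not part of the original post. Note that the `User-agent` lines are kept contiguous here, because Python's parser treats a blank line as the end of a group.

```python
from urllib.robotparser import RobotFileParser

# Shortened, hypothetical excerpt of the list above. No blank lines between
# the User-agent lines: Python's parser ends a group at a blank line.
ROBOTS_TXT = """\
User-agent: AhrefsBot
User-agent: MJ12bot
User-agent: SemrushBot
Disallow: /
"""


def is_allowed(robots_txt: str, agent: str, url: str) -> bool:
    """Return True if `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    for agent in ("AhrefsBot", "MJ12bot", "SemrushBot", "Googlebot"):
        print(agent, is_allowed(ROBOTS_TXT, agent, "https://example.com/page"))
```

This only shows what a compliant crawler would conclude from the file; it does not stop a crawler that ignores robots.txt.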

Patrick

Does this work with .htaccess?

Anthony

https://pastebin.com/W1EhVmSa

<textarea>

RewriteEngine On

RewriteCond %{REQUEST_URI} !/robots\.txt$

RewriteCond %{HTTP_USER_AGENT} ^$ [OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EventMachine.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NerdyBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Typhoeus.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*archive.org_bot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*archive.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*adbeat_bot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*github.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*chroot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Jorgee.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Go\ 1.1\ package.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Go-http-client.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Copyscape.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*semrushbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*semrushbot-sa.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*JamesBOT.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SEOkicks-Robot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*LinkpadBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*getty.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*picscout.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*AppEngine.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Zend_Http_Client.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BlackWidow.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*openlink.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*spbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Nutch.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Jetbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebVac.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Stanford.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*scooter.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*naver.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*dumbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Hatena\ Antenna.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*grub.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*looksmart.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebZip.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*larbin.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*b2w/0.1.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Copernic.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*psbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Python-urllib.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NetMechanic.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*URL_Spider_Pro.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*CherryPicker.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*ExtractorPro.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*CopyRightCheck.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Crescent.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*CCBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SiteSnagger.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*ProWebWalker.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*CheeseBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*LNSpiderguy.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EmailCollector.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EmailSiphon.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebBandit.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EmailWolf.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*ia_archiver.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Alexibot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Teleport.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*MIIxpc.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Telesoft.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Website\ Quester.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*moget.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebStripper.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebSauger.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebCopier.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NetAnts.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Mister\ PiX.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebAuto.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*TheNomad.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WWW-Collector-E.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*RMA.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*libWeb/clsHTTP.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*asterias.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*httplib.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*turingos.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*spanner.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Harvest.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*InfoNaviRobot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Bullseye.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebBandit.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NICErsPRO.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Microsoft\ URL\ Control.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*DittoSpyder.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Foobot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebmasterWorldForumBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SpankBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BotALot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*lwp-trivial.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebmasterWorld.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BunnySlippers.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*URLy\ Warning.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*LinkWalker.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*cosmos.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*hloader.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*humanlinks.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*LinkextractorPro.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Offline\ Explorer.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Mata\ Hari.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*LexiBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Web\ Image\ Collector.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*woobot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*The\ Intraformant.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*True_Robot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BlowFish.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SearchEngineWorld.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*JennyBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*MIIxpc.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BuiltBotTough.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*ProPowerBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BackDoorBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*toCrawl/UrlDispatcher.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebEnhancer.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*suzuran.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebViewer.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*VCI.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Szukacz.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*QueryN.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Openfind.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Openbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Webster.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EroCrawler.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*LinkScan.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Keyword.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Kenjin.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Iron33.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Bookmark\ search\ tool.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*GetRight.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*FairAd\ Client.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Gaisbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Aqua_Products.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Radiation\ Retriever\ 1.1.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Flaming\ AttackBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Oracle\ Ultra\ Search.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*MSIECrawler.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*PerMan.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*searchpreview.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*sootle.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Enterprise_Search.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Bot\ mailto:craftbot@yahoo.com.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*ChinaClaw.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Custo.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*DISCo.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Download\ Demon.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*eCatch.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EirGrabber.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EmailSiphon.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EmailWolf.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Express\ WebPictures.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*ExtractorPro.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*EyeNetIE.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*FlashGet.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*GetRight.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*GetWeb!.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Go!Zilla.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Go-Ahead-Got-It.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*GrabNet.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Grafula.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*HMView.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*HTTrack.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Image\ Stripper.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Image\ Sucker.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Indy\ Library.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*InterGET.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Internet\ Ninja.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*JetCar.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*JOC\ Web\ Spider.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*larbin.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*LeechFTP.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Mass\ Downloader.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*MIDown\ tool.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Mister\ PiX.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Navroad.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NearSite.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NetAnts.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NetSpider.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Net\ Vampire.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*NetZIP.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Octopus.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Offline\ Explorer.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Offline\ Navigator.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*PageGrabber.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Papa\ Foto.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*pavuk.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*pcBrowser.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*RealDownload.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*ReGet.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SiteSnagger.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SmartDownload.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SuperBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SuperHTTP.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Surfbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*tAkeOut.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Teleport\ Pro.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*VoidEYE.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Web\ Image\ Collector.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Web\ Sucker.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebAuto.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebCopier.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebFetch.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebGo\ IS.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebLeacher.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebReaper.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebSauger.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*wesee.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Website\ eXtractor.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Website\ Quester.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebStripper.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebWhacker.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebZIP.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Wget.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Widow.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WWWOFFLE.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Xaldon\ WebSpider.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Zeus.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Semrush.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BecomeBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Screaming.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Screaming\ Frog\ SEO.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SEO.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*AhrefsBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*AhrefsSiteAudit.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*MJ12bot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*rogerbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*exabot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Xenu.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*dotbot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*gigabot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Twengabot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*htmlparser.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*libwww.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Python.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*perl.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*urllib.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*scan.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Curl.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*email.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*PycURL.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*Pyth.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*PyQ.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebCollector.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*WebCopy.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*webcraw.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SurveyBot.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*SurveyBot_IgnoreIP.*$ [NC,OR]

RewriteCond %{HTTP_USER_AGENT} ^.*BlekkoBot.*$ [NC]

RewriteRule ^.*$ http://www.google.com/ [R=302,L]

</textarea>
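To see which requests a rule set like this would catch, the matching logic can be approximated outside Apache. The sketch below is an assumption-laden model, not the rules themselves: it uses a small hypothetical subset of the substrings, treats `^.*name.*$ [NC]` as a case-insensitive substring match, and approximates the robots.txt exemption with a simple suffix check. The `would_redirect` helper and the sample user agents are made up for illustration.

```python
import re

# Hypothetical subset of the substrings used in the RewriteCond lines above.
BLOCKED_SUBSTRINGS = ["AhrefsBot", "MJ12bot", "Semrush", "SEO", "scan", "Wget"]

# ^.*name.*$ with [NC] behaves like a case-insensitive substring search.
BLOCKED_RE = re.compile(
    "|".join(re.escape(s) for s in BLOCKED_SUBSTRINGS), re.IGNORECASE
)


def would_redirect(path: str, user_agent: str) -> bool:
    """Approximate the rule set: True if the request would be redirected."""
    # robots.txt stays reachable so compliant bots can still read it.
    if path.endswith("/robots.txt"):
        return False
    # The ^$ condition catches requests with an empty User-Agent header.
    if user_agent == "":
        return True
    return BLOCKED_RE.search(user_agent) is not None


if __name__ == "__main__":
    samples = [
        "Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36",
        "MyScanner/1.0",  # hypothetical agent caught by the broad "scan" entry
    ]
    for ua in samples:
        print(would_redirect("/", ua), ua)
```

Short entries such as `SEO`, `scan`, or `email` match any user agent containing those letters, so it is worth running real log samples through a check like this before relying on the list.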

Mario

Thank you. I just put this in .htaccess, right?

Anthony » Mario

Use the one on Pastebin for .htaccess.

Mario » Anthony

thanks mate, appreciate this.

Anthony

The .htaccess version is here:

https://pastebin.com/W1EhVmSa

Buth

The big question now is: do bots follow robots.txt rules?

Only reputable bots follow robots.txt rules. Most others just ignore them.

Alexander

Are there any plugins that do this and get updated with new bots?

Chowdhury

You can block a particular bot from crawling or indexing your site by editing the robots.txt file, but if you want to be more secure, use the Bad Bots Protection from Sucuri.net.

Sucuri – Complete Website Security, Protection & Monitoring.

I block them in the Sucuri panel as well.

Steven

https://wordpress.org/plugins/blackhole-bad-bots/

Blackhole for Bad Bots.

Srikant

robots.txt is just an instruction to bots, and Google and others do not necessarily obey it; they all crawl. If you strictly do not want any other robots to crawl the site, you can password-protect it, or you can allow only certain bots by checking the request header. You have to check three ways whether a bot really is from Google or is another bot using Google's name. There are plenty of ways to do this.
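One reading of the multi-step check mentioned here is the reverse-then-forward DNS verification that Google documents for Googlebot: look up the hostname for the visiting IP, confirm it falls under a Google crawler domain, then resolve that hostname and confirm it maps back to the same IP. The sketch below is a minimal illustration under those assumptions; `is_real_googlebot` and its injectable `reverse`/`forward` resolver parameters are hypothetical names, and the forward lookup shown handles IPv4 only.

```python
import socket
from typing import Callable, List

# Domains Google documents for its crawlers' reverse DNS names.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com", ".googleusercontent.com")


def _reverse(ip: str) -> str:
    """Reverse DNS: IP address to primary hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward(host: str) -> List[str]:
    """Forward DNS: hostname to its IPv4 addresses."""
    return socket.gethostbyname_ex(host)[2]


def is_real_googlebot(
    ip: str,
    reverse: Callable[[str], str] = _reverse,
    forward: Callable[[str], List[str]] = _forward,
) -> bool:
    """Check a visitor that claims to be Googlebot via its User-Agent.

    Step 1: reverse lookup of the IP.
    Step 2: the hostname must end in a Google crawler domain.
    Step 3: forward lookup of that hostname must include the same IP.
    """
    try:
        host = reverse(ip)
    except OSError:  # socket.herror and socket.gaierror are OSError subclasses
        return False
    if not host.lower().rstrip(".").endswith(GOOGLE_SUFFIXES):
        return False
    try:
        return ip in forward(host)
    except OSError:
        return False
```

The resolvers are parameters so the logic can be exercised without network access; in production the defaults perform real DNS lookups, so results should be cached per IP.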

Franz

We use Sucuri website firewall.